Below are the abstracts for two papers that were accepted for presentation this spring at conferences in Florence and Glasgow. Due to the dangers posed by the coronavirus pandemic early in the year, both of these events – like thousands of other conferences and gatherings around the world – have been canceled. We can hope that they will be rescheduled in the near future, and I will be sure to report here and on JDB1745’s social media accounts if and when new dates are chosen. More important than any conference, of course, is our collective health, and the entire JDB team hopes that yours remains or once again becomes robust.

Both of these papers stem from my extended work on the nature of plebeian Jacobite culture through the collection and analysis of large-scale prosopographic data compiled from archival and published sources. Please take care of yourselves; we have much to discover together!

Yours,

Darren

Fifth Colloquium of the Jacobite Studies Trust

Middlebury School, Florence

22-24 May 2020 (Canceled)

By Hook or by Crook

A Modern Reassessment of Jacobite Impressment

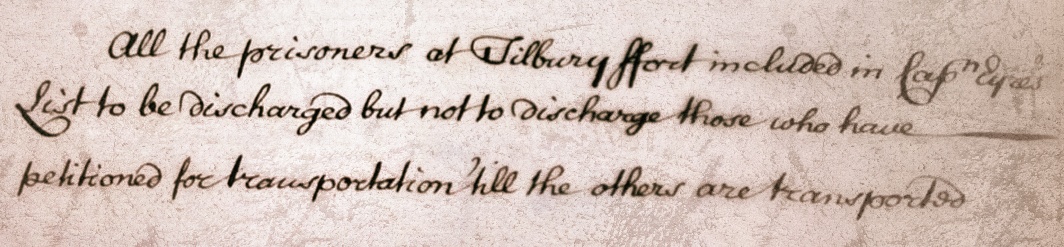

Little scholarly debate surrounds the ubiquitous tale of recruits being forced to join and fight in the Jacobite armies during the 1715 and 1745 risings. The general historiography of the later Jacobite era flatly consigns the widespread prevalence of impressment tactics to the status of myth, or otherwise marginalises claims of forcible recruitment as simply a means to evade punishment. Many of these assertions, however, are built upon incomplete published transcriptions of prisoner lists, or are made without extensive analysis of large bodies of archival case records. This paper addresses some missed opportunities by considering the context and process of the British penal system in the mid-eighteenth century to re-examine how cases of impressment at Jacobite trials were handled and how they were resolved. Using a prosopographic approach to collect, analyse, and track hundreds of impressment accounts juxtaposed with primary-source evidence that illustrates the nature of Jacobite recruitment through the final campaign in 1745-6, the paper presents a modern, data-driven reassessment. This study will also consider the role of the Scottish Presbyterian clergy in vouching for those claiming force, as well as how Jacobite recruiting tactics differed from those of other contemporary European armies. Taken together, the results provide some fresh perspectives on the popularity of Jacobitism in its final stage and what that meant for the ‘legitimacy’ and effectiveness of the cause.

Outlander International Conference

University of Glasgow

2-6 June 2020 (Canceled)

‘What Makes Heroic Strife?’

Practical Jacobitism and its Burial at Culloden

The early Outlander novels and television episodes are set against the dramatic background of the final Jacobite rising in Britain, and they portray both the movement and its adherents in an uncomplicated and decidedly alluring manner. An entirely new audience has therefore been exposed to the concept of Jacobitism and its place within a larger historical context. But what did it really mean to be a Jacobite in the mid-eighteenth century, and how accurately are the common people characterised in the world of Outlander? This presentation digs a bit deeper into the historical reality of the Jacobite ‘cause’ and specifically examines the conflict between ideology and practice and the crossroads between them as exemplified on Culloden Moor in April 1746. Was it only the hopes of a Stuart restoration that died with the hundreds of Jacobite soldiers on that bleak spring day, or something far greater? Dispelling the myths and casting light on the realities of popular Jacobitism using the latest research on motivational agencies of the numerous Jacobite causes, this brief paper explores the experience of the common soldier against the backdrop of a calamitous civil war.

Darren S. Layne received his PhD from the University of St Andrews and is creator and curator of the Jacobite Database of 1745, a wide-ranging prosopographical study of people concerned in the last rising. His historical interests are focused on the mutable nature of popular Jacobitism and how the movement was expressed through its plebeian adherents. He is a passionate advocate of the digital humanities, data and metadata cogency, and Open Access.